Today, the National Assessment Governing Board released new results for social studies subjects from the National Assessment of Educational Progress (NAEP). EdWeek says, “8th Graders Don’t Know Much About History, National Exam Shows.” Betsy DeVos calls the findings “stark and inexcusable.”

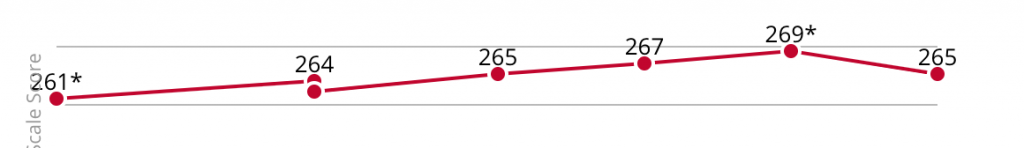

History and geography scores fell, although I don’t know if I’d agree with EdWeek’s Stephen Sawchuk and Sarah D. Sparks that “Eighth graders’ grasp of key topics in history have plummeted.” Here are the median history scores over time:

I would probably say that history scores “declined to a statistically significant degree compared to 2014, meaning that the change from 2014-18 is unlikely to reflect sampling error.” The differences between 2018 and all other years are within the margin of error and therefore may not be improvements or declines at all.
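To make the margin-of-error point concrete, here is a minimal sketch of how one might check whether the gap between two years' average scores is larger than chance alone would explain. It assumes independent samples, a normal approximation, and a 95% confidence level; the function name and every number in it are hypothetical illustrations, not actual NAEP figures or the procedure NAEP itself uses.

```python
import math


def difference_is_significant(score_a, se_a, score_b, se_b, z_crit=1.96):
    """Compare two average scores given their standard errors.

    Assumes the two samples are independent and the averages are
    approximately normal. Returns (difference, margin, is_significant).
    """
    diff = score_b - score_a
    # Standard error of a difference between two independent estimates.
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    # Margin of error for the difference at the chosen confidence level.
    margin = z_crit * se_diff
    return diff, margin, abs(diff) > margin


# Hypothetical values only: a 4-point drop with standard errors near 1 point.
drop, drop_margin, drop_sig = difference_is_significant(267.0, 0.8, 263.0, 0.9)
print(f"change={drop:+.1f}, margin=±{drop_margin:.2f}, significant={drop_sig}")

# Hypothetical values only: a 1-point drop with the same kind of standard errors.
dip, dip_margin, dip_sig = difference_is_significant(264.0, 0.9, 263.0, 0.9)
print(f"change={dip:+.1f}, margin=±{dip_margin:.2f}, significant={dip_sig}")
```

With these made-up inputs, the 4-point change exceeds its margin and the 1-point change does not. That is the distinction I am drawing above: a change can be real in the statistical sense without being large, and a small change may not be a change at all.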

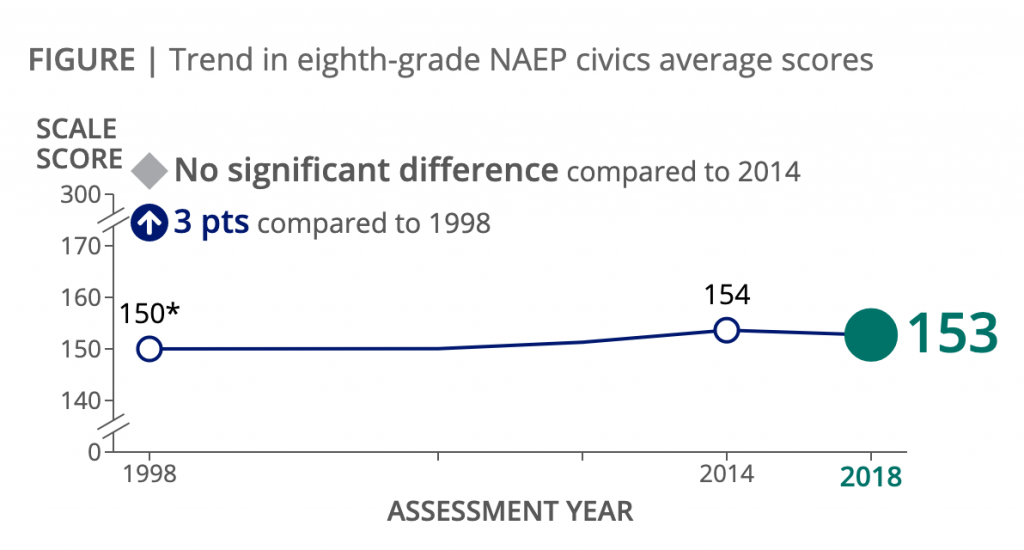

Civics is my own field of interest, and I was one of the designers of the NAEP Civics Assessment instrument. The results for Civics were flat. (Unless you want to say that they “plummeted” by one point.)

The NAEP is extraordinarily useful for analyzing differences in scores by demographic group and for understanding how educational experiences (e.g., taking an 8th grade civics course) relate to outcomes. Unless you have worked with the dedicated folks at the Educational Testing Service and the National Assessment Governing Board, you cannot imagine how careful they are about test-design and implementation or how complex the whole process is.

However, as in the past, I would like to offer these caveats about the NAEP results and the surrounding commentary.

First, as noted above, the changes are subtle, and some are within the margin of error. There is no evidence here of dramatic decline.

Second, the definitions of “proficient” and “advanced” are basically arbitrary. The 1998 designers chose scores that would count as “proficient,” based on their own judgment. Based on the data from that year, they said that just 22% of American 8th graders were proficient. They must have been aware that they would communicate a message of crisis.

The subsequent Assessments have been normed to the 1998 instrument. Roughly speaking, if we drafted an instrument that indicated a major improvement, it would probably not be fielded as such, because the high scores in the pilot phase would suggest that it was an invalid measure–the questions must be too easy.

Therefore, it isn’t really news that the proficiency level is in the neighborhood of 25%. That is how the test is designed. This is not to say that we can’t gradually boost it to 30% or higher if we make a lot of progress in classrooms. But you should understand why the numbers could not be much higher.

The judgment that most kids are not proficient is subject to debate. If you look at the actual questions and how many 8th graders got each one right, you may conclude that most students are below proficient. Or you may think that the questions are surprisingly hard and that we are expecting a lot from 13-year-olds.

For instance, 50% answered this item right:

The United States Congress can pass a bill even if the President disagrees with the bill because

- Congress must make sure that the needs of all citizens are met

- Congress can make laws more quickly when it does not have to involve the President

- Congress usually knows more about what the laws mean than the President does

- Congress is the primary legislative power of the government

Is 50% a terrible result, or not too bad? That is a matter of judgment and expectations, not statistics.

Third, the NAEP measures some things but not others. The Civics assessment includes many items about the structure of the US government–which branch or level has what authority. It excludes current events, value-commitments (such as patriotism or commitment to equality), items about social issues, detailed questions about civic institutions outside of government (e.g., What does a PTA do?), items about specific state and local governments, and measures of students’ civic activity outside of school.

Finally, it is difficult to separate reading from civics, particularly at the 4th and 8th grade levels. I don’t think anyone does that better than the NAEP does, but it’s an intrinsic challenge.

A kid who hasn’t actually learned anything specific about the US government but is used to reading advanced texts–The Lord of the Rings, for example–could glean a lot of correct answers based on the meaning of words like “primary” and “legislative” in the example above. A different kid who has dutifully learned some specific civics content might be thrown by the language of the Assessment, especially when the prompts contain longer passages.

It is true that literacy is a civic asset and that people who can do a lot with words are better prepared for civic life. However, if we think there is a separate domain of civic learning–as I do–then measuring it with a written instrument that isn’t confounded with literacy is a challenge.

Overall, I believe there is valuable information in the NAEP (and it’s important for Congress to fund it regularly). But the headlines are hyped. The data show evidence of stability in the relatively narrow set of outcomes that the Assessment measures, with the caveat that the test is designed to be stable over time. If we want to improve civics, we should focus mainly on what helps various kinds of kids to learn the various domains of content that are on the test–plus the important outcomes that the NAEP does not measure at all.

See also: deep in the thickets of test design (2011), some surprising results from the 2010 NAEP Civics assessment (2011), what did young voters know and understand in 2012? (2012), effects of debate, discussion, and simulation in k-12 schools, and persistent civic gaps (2013), CIRCLE’s release on today’s Civics results (2015).